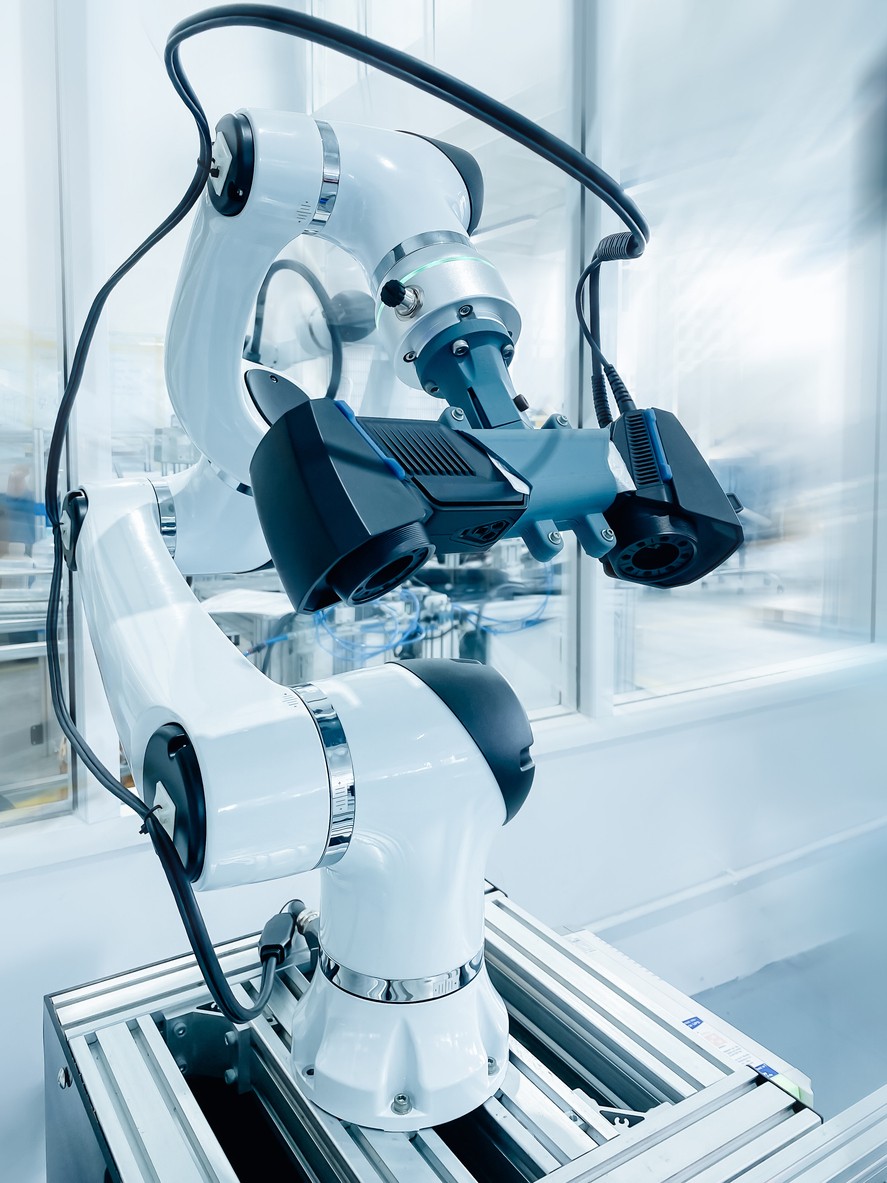

Machine vision gives autonomy to robots

It’s a fact. Giving vision to robots isn’t just about Pick and Place.

A certain level of autonomy can be achieved for specific industrial tasks. Take a recent robotic polishing application as an example. The requirement was for a robot cell that could receive parts to be polished: any part, within a specific range of sizes. The catch was that the robot/vision system had no data about the parts and no prior knowledge of their shape.

This project required two robots and a turntable: one robot for handling the parts and the other for moving the polishing head across 100% of the surface area of the part to be polished.

The enabler is the 3D camera that images the parts as they are rotated on the turntable, removing the problem of occlusion. The handling robot will also turn the part over, so both sides can be imaged.

The project in detail

This project sat within the field of robotics and autonomous systems, relying heavily on advances in computer vision, particularly 3D imaging, algorithmic surface processing, and robotic control. The work draws on technologies typically applied in industrial automation, but its application here was highly specialised for the automotive sector. Integrating a polishing end-effector, autonomous machine vision, and robotic manipulation aimed to resolve a specific challenge in automotive manufacturing: removing the need to pre-program the robot with product data.

At the start of the project, several relevant technologies were available individually but had not been integrated to meet the project’s needs. Structured light 3D cameras capable of capturing high-fidelity surface data were commercially available and already in use across various industries. Robotic arms with accurate and repeatable motion were also well established. In addition, end-effector tools capable of precisely removing micron-level amounts of material from surfaces had been developed for industrial cleaning and manufacturing use cases.

However, these technologies functioned in isolation. There was no system that could autonomously image an irregularly shaped object from multiple angles, reconstruct a complete and coherent 3D model, intelligently plan a polishing path based on that model, and then execute the task via robotic motion—all without human intervention. The use of point cloud alignment algorithms like Iterative Closest Point (ICP) was known, but they had not been robustly applied in dynamic robotic environments where vision data needed to inform complex polishing actions in real time.

The project set out to achieve a complete and autonomous solution that would polish 100% of an object’s surface, regardless of its geometry, without requiring any manual mapping or robot programming for each individual part. This required moving beyond the traditional limitations of pre-programmed robotic movements or human-led imaging and surface analysis.

The core innovation targeted was a software-driven system that could make autonomous decisions by analysing complex 3D shapes and generating a polishing path that accounted for curvature, angles, and occlusions. The intention was to create a fully autonomous workflow that began with object imaging and ended with robotic laser polishing, all within a controlled robotic cell. The solution needed to handle a diverse range of automotive parts, many of which are irregularly shaped.

Our development team encountered several key scientific and technological uncertainties throughout the course of the project. One of the foremost challenges was determining whether a complete and uninterrupted 3D model of each object could be constructed using structured light imaging, given the potential for shadows and occlusions when imaging complex geometries. The fidelity of this model was essential, as any gaps in the data could compromise the accuracy of the polishing path and result in incomplete polishing.

Another area of uncertainty involved the fusion of multiple point clouds collected from different angles. The integration of these datasets into a unified, coherent 3D mesh was not trivial, particularly when the object had to be manipulated and rotated to expose all surfaces. The team needed to determine whether open-source alignment methods like ICP could provide sufficient precision in this context, or whether new adaptations would be necessary.
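To make the alignment step concrete, here is a minimal sketch of the ICP idea, reduced to 2D and written in plain Python so it runs without dependencies. It is not the project's implementation: the function names (`icp_2d`, `best_rigid_2d` and so on) are hypothetical, the nearest-neighbour search is brute force, and a production system would work on 3D clouds with a library routine. Each iteration pairs every source point with its closest target point, then solves for the rigid motion that best fits those pairs.

```python
import math

def nearest(p, cloud):
    """Return the point in `cloud` closest to `p` (brute-force search)."""
    return min(cloud, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)

def best_rigid_2d(src, dst):
    """Least-squares rotation angle and translation mapping paired src -> dst."""
    n = len(src)
    csx = sum(p[0] for p in src) / n
    csy = sum(p[1] for p in src) / n
    cdx = sum(p[0] for p in dst) / n
    cdy = sum(p[1] for p in dst) / n
    s_dot = s_cross = 0.0
    for (sx, sy), (dx, dy) in zip(src, dst):
        ax, ay = sx - csx, sy - csy      # source point relative to its centroid
        bx, by = dx - cdx, dy - cdy      # target point relative to its centroid
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    theta = math.atan2(s_cross, s_dot)   # optimal rotation in 2D
    c, s = math.cos(theta), math.sin(theta)
    tx = cdx - (c * csx - s * csy)       # translation aligns the centroids
    ty = cdy - (s * csx + c * csy)
    return theta, tx, ty

def apply_rigid(points, theta, tx, ty):
    """Rotate points by theta about the origin, then translate."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]

def icp_2d(source, target, iterations=30):
    """Iteratively align `source` to `target`; returns the moved source points."""
    current = list(source)
    for _ in range(iterations):
        matches = [nearest(p, target) for p in current]
        theta, tx, ty = best_rigid_2d(current, matches)
        current = apply_rigid(current, theta, tx, ty)
    return current

def rms_error(a, b):
    """Root-mean-square distance between index-paired point lists."""
    total = sum((p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2 for p, q in zip(a, b))
    return math.sqrt(total / len(a))

# Illustrative data: an L-shaped outline and a slightly misaligned copy of it.
target = [(float(i), 0.0) for i in range(10)] + [(0.0, float(j)) for j in range(1, 6)]
source = apply_rigid(target, 0.05, 0.2, -0.1)
aligned = icp_2d(source, target)
```

ICP only converges reliably when the starting misalignment is small relative to the spacing of the points, as it is in this toy data. In a cell like the one described, the known turntable and robot poses would plausibly supply that initial guess, though that is an assumption rather than something stated here.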

In addition, the project faced uncertainty in generating an intelligent and efficient path for the polishing end-effector. This was not simply a case of tracing a fixed route; the system needed to segment the 3D surface logically and determine the most effective orientation and direction of polishing for each region. Finally, there was technical uncertainty in translating these paths, calculated in the vision system’s coordinate space, into robot movements that would execute the polishing process with sufficient accuracy and repeatability.

To address these challenges, the team at Cortha developed a range of new software modules and algorithms to orchestrate the imaging, planning, and execution phases of the project. A custom interface was built to allow full control of the 3D camera, enabling it to extract both point cloud and RGB data under programmable conditions.

Robotic automation was employed to manipulate the object under the camera, ensuring full surface coverage. A two-robot configuration was used: one for handling and orienting the object for imaging, and the second for managing the polishing process.

In the data processing stage, a tailored implementation of the ICP algorithm was used to align and merge point clouds from multiple views. This enabled the system to generate a complete 3D mesh of each object. The mesh was then converted into a flattened representation suitable for path planning. The software included logic to divide the surface into manageable regions and assign directional paths to each based on their geometry.
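The region-splitting and path-assignment logic can be illustrated with a small sketch. The approach below is an assumption chosen for simplicity, not the method used in the project: mesh triangles are grouped by the dominant axis of their face normal, and a flattened rectangular patch is covered with a back-and-forth (boustrophedon) sweep. All function names and the sample geometry are hypothetical.

```python
def face_normal(a, b, c):
    """Unnormalised normal of triangle (a, b, c) via the cross product."""
    ux, uy, uz = (b[i] - a[i] for i in range(3))
    vx, vy, vz = (c[i] - a[i] for i in range(3))
    return (uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx)

def dominant_axis(normal):
    """Label such as '+z' or '-x' for the largest component of the normal."""
    axis = max(range(3), key=lambda i: abs(normal[i]))
    sign = "+" if normal[axis] >= 0 else "-"
    return sign + "xyz"[axis]

def segment_faces(triangles):
    """Group triangles into regions keyed by the direction they mostly face."""
    regions = {}
    for tri in triangles:
        regions.setdefault(dominant_axis(face_normal(*tri)), []).append(tri)
    return regions

def zigzag(u_min, u_max, v_min, v_max, step):
    """Back-and-forth waypoints covering a flattened rectangular patch."""
    path = []
    v = v_min
    left_to_right = True
    while v <= v_max + 1e-9:             # small tolerance for float accumulation
        row = [(u_min, v), (u_max, v)]
        path.extend(row if left_to_right else row[::-1])
        left_to_right = not left_to_right
        v += step
    return path

# Illustrative data: one upward-facing and one sideways-facing triangle.
triangles = [
    ((0, 0, 0), (1, 0, 0), (0, 1, 0)),   # normal points along +z
    ((0, 0, 0), (0, 1, 0), (0, 0, 1)),   # normal points along +x
]
regions = segment_faces(triangles)
sweep = zigzag(0.0, 2.0, 0.0, 1.0, 0.5)
```

A real planner would also need to handle curved regions, tool orientation along the surface normal, and overlap between neighbouring passes; this sketch only shows the shape of the problem.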

Once paths were generated, they were translated into robot-compatible coordinates and fed into the polishing control software. Each step of this process required iterative testing and refinement to ensure that transitions between data capture, planning, and execution were lossless and precise. The result was a complete autonomous robotic workflow, capable of polishing irregularly shaped objects.
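Translating a path from the vision system's coordinate space into robot coordinates is typically done with a homogeneous transform between the two frames. The sketch below assumes that transform is already known, for example from a hand-eye calibration, and uses made-up values (a 90 degree yaw and an offset in millimetres) purely for illustration. The function names are hypothetical.

```python
import math

def make_transform(yaw_deg, translation):
    """4x4 homogeneous transform: rotate about Z by yaw_deg, then translate."""
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    tx, ty, tz = translation
    return [
        [c,  -s,  0.0, tx],
        [s,   c,  0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]

def transform_point(T, p):
    """Apply a 4x4 homogeneous transform T to a 3D point p."""
    x, y, z = p
    return tuple(T[r][0] * x + T[r][1] * y + T[r][2] * z + T[r][3] for r in range(3))

def camera_path_to_robot(T_robot_camera, path):
    """Map every waypoint from the camera frame into the robot base frame."""
    return [transform_point(T_robot_camera, p) for p in path]

# Assumed calibration: camera frame is yawed 90 degrees and offset from the robot base.
T_robot_camera = make_transform(90.0, (100.0, 0.0, 50.0))
camera_path = [(10.0, 0.0, 0.0), (10.0, 5.0, 0.0)]
robot_path = camera_path_to_robot(T_robot_camera, camera_path)
```

With these assumed values, a waypoint 10 mm along the camera's X axis lands at roughly (100, 10, 50) in the robot frame. Any error in the calibration transform carries straight through to the tool position, which is one reason this step needs careful testing.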